Qatar Airways is breaking new ground with its “AI Adventure” campaign, which leverages the power of Generative AI.

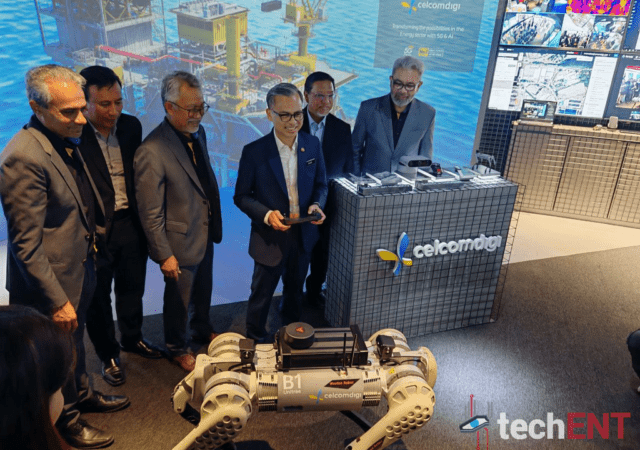

CelcomDigi Launches All New AI Experience Center

CelcomDigi launches its new AI Experience Center (AiX) at CelcomDigi Hub in Subang Jaya’s Hi-Tech Park. The new center looks to spur innovation and collaboration.

Sustainability Cannot Exist Without Innovation, & Vice Versa – Here’s Why

Dell Technologies weighs in on why innovation and sustainability must go hand-in-hand in light of the recent 2030 Asia Pacific SDG Progress Report.

Axrail Collaborates with AWS & Phison in Launching Southeast Asia’s First Gen AI Lab

Axrail launches Southeast Asia’s first Generative AI (Gen AI) Lab in collaboration with Amazon Web Services (AWS) and Phison.

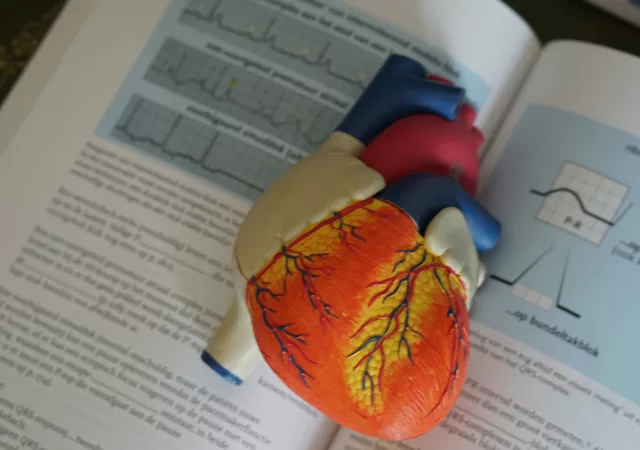

Scientists Just Used AI to Discover New Genes Linked to Heart Disease

Scientists at the Icahn School of Medicine at Mount Sinai have discovered 17 rare gene variants linked to heart disease using AI.

Bringing the Open Source Way to AI

Can we open source AI? Ashesh Badani, VP and Chief Product Officer at Red Hat, weighs in on the big question of open source AI.

DAMO Academy and the World Health Organization Collaborate to Push Medical AI Boundaries for Developing Countries

DAMO Academy and the World Health Organization (WHO) collaborate to advance the application of AI in healthcare across the Western Pacific region.

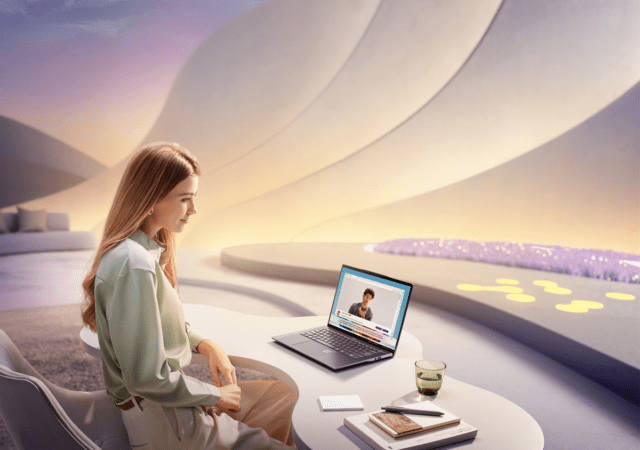

Chromebook Plus Gets an AI Infusion with Gemini

Google recently unveiled the Chromebook Plus, a new laptop class promising a more powerful and intelligent user experience with the help of artificial intelligence (AI). Let’s delve into the new features coming to Chromebook Plus and how it leverages AI…

Lenovo Deploys an AI Engine to Streamline Sustainable IT Solutions for Businesses with LISSA

Lenovo announces LISSA, an AI model that helps companies achieve their sustainability goals with systematic insights and actionable data.

Acer’s First Copilot+ PC is the Acer Swift 14 AI

Acer supercharges its Swift lineup with the Snapdragon X-powered Swift 14 AI Copilot+ PC.
