ASUS ZenBook Duo (2024) First Look | techENT

[VIDEO] Samsung Galaxy S24 Series First Look

[VIDEO] OPPO Reno 11 Pro Review

First Look at the Huawei MatePad Pro 13.2 & MateBook D16

OPPO Find N3 Flip First Look & Specs Rundown

OPPO Find N3 First Look & Specs Rundown

Unboxing the Lenovo Ideapad V14 (ASMR Edition)

Hands On with the Nothing Phone (2)

[SPONSORED] Make Classrooms a JOI to Learn in with the JOI Smartboard

Enhance classroom learning with the JOI Smartboard. Bridge the gap between traditional teaching methods and modern technology to create an interactive learning experience.

AI and Environmental Sustainability – A Symbiotic Relationship

Explore the game-changing potential of AI and its impact on industries and economies. Discover how data processing and data centre performance are crucial for leveraging this transformative technology.

Huawei Watch Fit 3 Available for Blind Pre-order in Malaysia

Huawei opens blind pre-orders for the Watch Fit 3 in Malaysia, giving consumers a chance to get their hands on the latest wearable ahead of the rest.

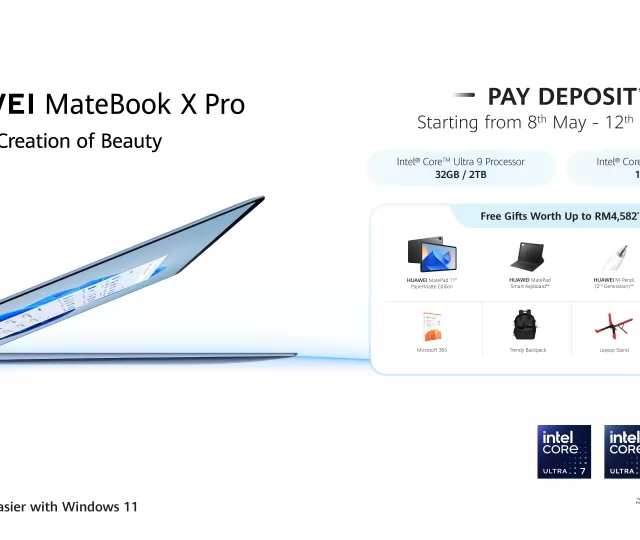

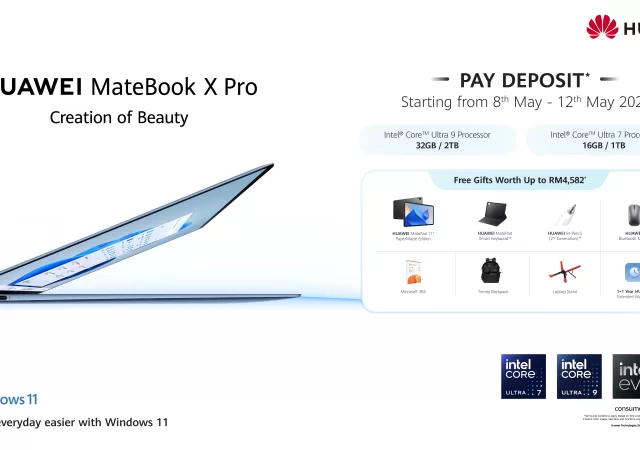

Huawei’s MateBook X Pro Pre-orders Kick Off in Malaysia

Huawei kicks off blind pre-orders for the upcoming MateBook X Pro, which is poised to launch on May 13, 2024.

Synology DS224+ In-Depth Review: Plug and Play NAS Systems Can’t Get Any Easier

The Synology DS224+ provides a solution to an increasing problem in a world where we generate so much data. But is it worth investing in? Should you get one?

Apple M4 Chip Makes Debut with New iPad Pro

The new iPad Pro comes with Apple’s most powerful SoC yet. The M4 Chip has a 10-core CPU, a 10-core GPU and its most powerful Neural Engine.

New iPad Pro Pushes Boundaries with Ultra Retina XDR Display & Apple M4 Silicon

Apple’s new iPad Pro debuts with the new M4 chip, Apple’s Ultra Retina XDR display and a bevy of new accessories.

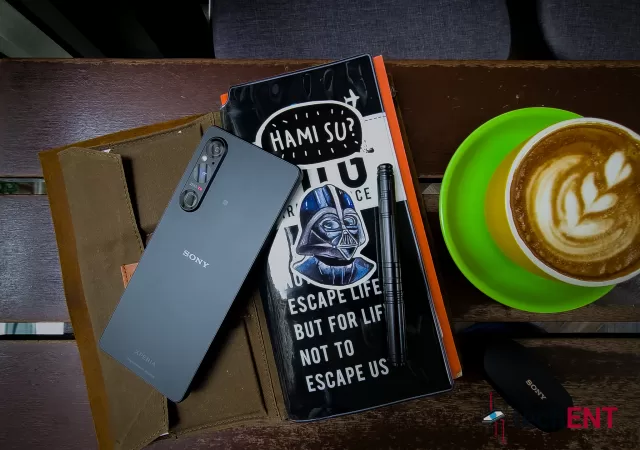

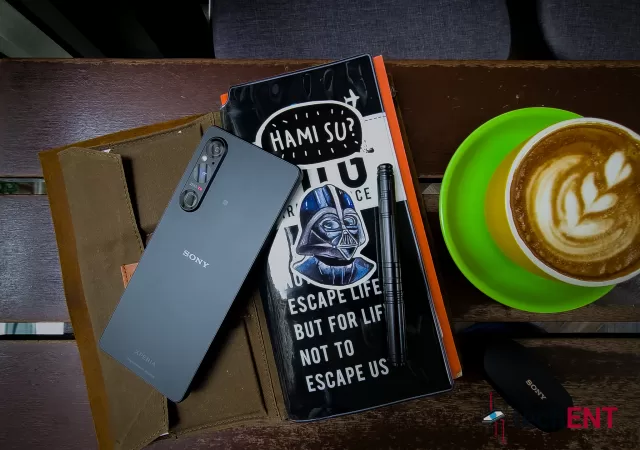

The Sony Xperia 1 V In-Depth Review – Maybe the Best MYR 6,399 You Can Spend

Sony’s Xperia 1 V is a thing of understated beauty to us. We really like it, but we also think that it is not made for everyone.

HP Pavilion Plus 14 AMD 7840U Review

Great for general work (typing and browsing), photo editing, casual video editing, light to moderate gaming, traveling, and watching videos, the HP Pavilion Plus 14 AMD 7840U doesn’t break the bank. Perfect for students and those who move about a lot for work, especially when on sale.